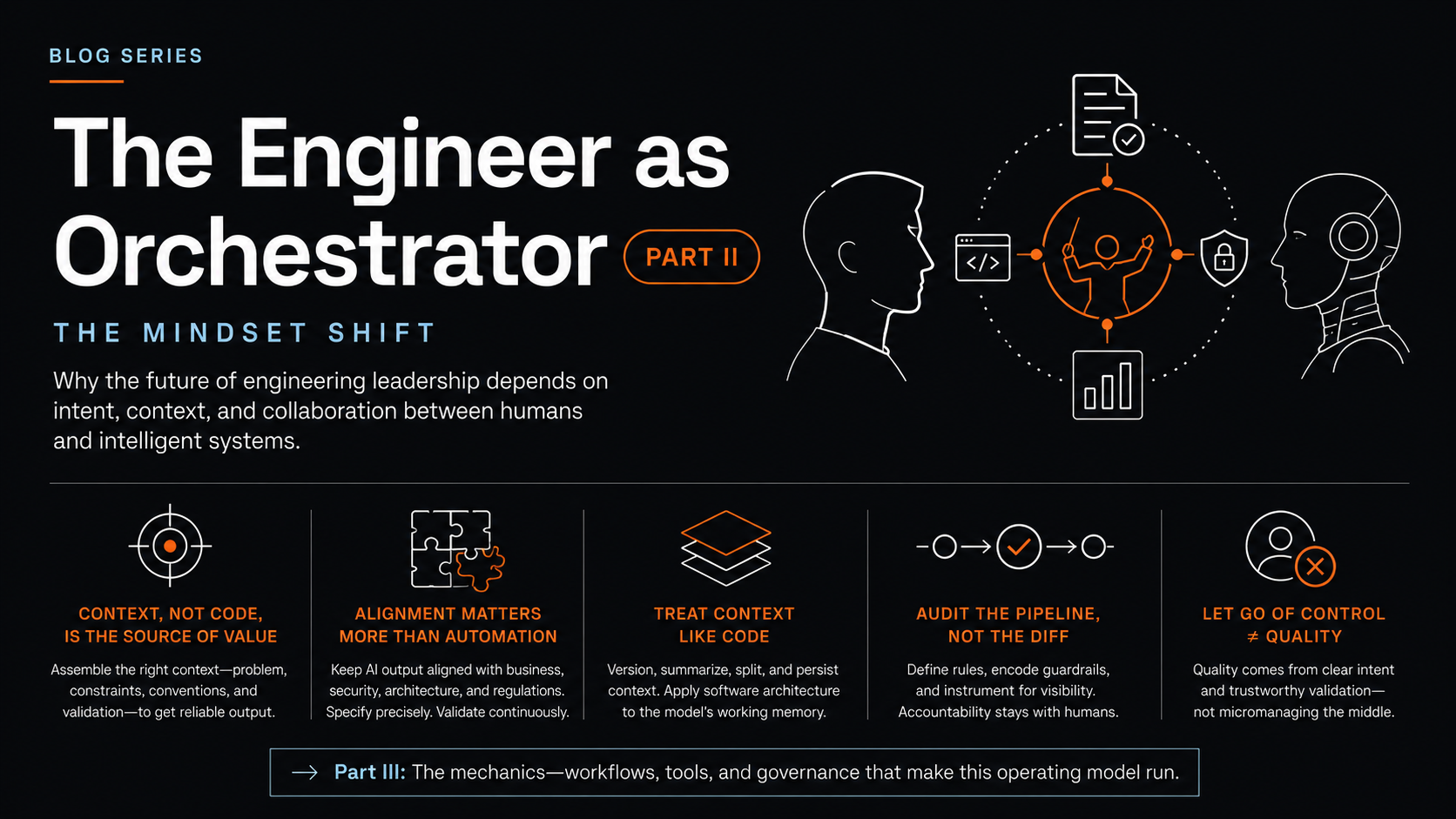

The Engineer as Orchestrator, Part II: The Mindset Shift

Why the future of engineering leadership depends on intent, context, and collaboration between humans and intelligent systems.

The tools showed up faster than the thinking. Most teams adopting AI coding assistants are still operating on assumptions formed when humans were the only ones writing code, which is why their results plateau within a quarter or two. The mindset shift required to lead engineering work in this environment is genuinely uncomfortable, because it asks senior people to give up the parts of the job that made them senior in the first place.

Context, not code, is the source of value

The differentiator used to be deep familiarity with frameworks, design patterns, and the texture of a given language or runtime. That knowledge still matters, but it has been demoted. The new differentiator is the ability to assemble the right context for an AI system to produce reliable output, which includes the problem statement, the constraints, the relevant code, the implicit conventions of the codebase, and the validation criteria that determine when the work is actually done.

This is harder than it sounds. Models simulate understanding based on what gets put in front of them, so when the context is incomplete or contradictory, the output degrades in ways that are often subtle enough to ship. Engineers who got senior by writing fast and well now have to slow down at the front of the work, articulate what they previously held in their heads, and make their intent legible to a system that has no shared history with the team.
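One way to make that articulation concrete is to treat the context as a structured object, not a free-form prompt. The sketch below is illustrative only: `TaskContext` and its field names are hypothetical, chosen to mirror the five ingredients named above, and are not taken from any particular tool.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class TaskContext:
    """Hypothetical bundle of what an agent needs for one task."""

    problem_statement: str
    constraints: list[str] = field(default_factory=list)
    relevant_files: list[str] = field(default_factory=list)
    conventions: list[str] = field(default_factory=list)
    validation_criteria: list[str] = field(default_factory=list)

    def missing_parts(self) -> list[str]:
        """Return the names of the parts that are still empty."""
        parts = {
            "problem_statement": self.problem_statement.strip(),
            "constraints": self.constraints,
            "relevant_files": self.relevant_files,
            "conventions": self.conventions,
            "validation_criteria": self.validation_criteria,
        }
        return [name for name, value in parts.items() if not value]


ctx = TaskContext(
    problem_statement="Add rate limiting to the public API",
    constraints=["no new runtime dependencies"],
)
print(ctx.missing_parts())
```

A check like `missing_parts()` does not make the context good, but it does surface the gaps before the agent starts filling them in on its own.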

Alignment matters more than automation

There is a temptation to measure progress by how much code the agents produce without human intervention. That measure is misleading. An agent that ships fifty pull requests a week while drifting away from the team's architectural direction is producing technical debt at scale, not productivity.

The job is to keep the AI's output aligned with business objectives, security posture, architectural integrity, and whatever regulatory environment the work sits inside. Practically, this means writing specifications precise enough to be machine-readable, not aspirational paragraphs that read well in a planning doc. It means running validation continuously instead of waiting for the end of a long agent run, when problems have already compounded across steps. It also means being deliberate about what the agent looks at and, more importantly, what it does not, because context discipline matters more than raw context size.
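As a rough illustration of validating continuously, the sketch below expresses a specification as named checks and runs them after every step, stopping at the first violation. Everything here is an assumption made for the example: `Check`, `run_with_validation`, and the idea that each agent step is a plain callable returning text.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Iterable, Optional


@dataclass(frozen=True)
class Check:
    """One machine-checkable requirement from the spec (hypothetical)."""

    name: str
    passes: Callable[[str], bool]  # True when the output satisfies the rule


def first_failure(output: str, checks: Iterable[Check]) -> Optional[str]:
    """Return the name of the first failing check, or None if all pass."""
    for check in checks:
        if not check.passes(output):
            return check.name
    return None


def run_with_validation(steps, checks):
    """Run steps in order, validating each output before moving on."""
    completed = []
    for index, step in enumerate(steps):
        output = step()
        failed = first_failure(output, checks)
        if failed is not None:
            # Stop here so later steps never build on a bad result.
            return completed, f"step {index} failed check '{failed}'"
        completed.append(output)
    return completed, None
```

The design choice worth noting is the early return: a failure at step one costs one step of rework, while the same failure found at the end of the run costs all of them.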

Treat context like code

Context windows are a hard limit on what a model can reason about in a single pass. Stuff too much in and the model loses focus on what matters. Strip too much out and it fills the gaps with plausible-looking inventions. Reliability comes from curating what the model sees, when it sees it, and what it carries forward.
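A minimal sketch of that curation, under loud assumptions: token cost is approximated by a whitespace word count (real tokenizers count differently), priorities are assigned by hand, and `curate` is a hypothetical helper, not part of any library.

```python
from __future__ import annotations


def estimate_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: counts whitespace-separated words."""
    return len(text.split())


def curate(items: list[tuple[int, str]], budget: int) -> list[str]:
    """Pick context items by descending priority without exceeding the budget.

    Each item is (priority, text). Items that do not fit are skipped,
    so a lower-priority item may still be included if it is small enough.
    """
    chosen = []
    remaining = budget
    for _priority, text in sorted(items, key=lambda item: item[0], reverse=True):
        cost = estimate_tokens(text)
        if cost <= remaining:
            chosen.append(text)
            remaining -= cost
    return chosen
```

The point is not the greedy algorithm itself but that the selection rule is explicit, reviewable, and repeatable, where an ad hoc paste into a prompt is none of those.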

In practice this means versioning your prompts and your specs the same way you version code, summarizing long-running work so the relevant history survives compression, splitting tasks across specialized agents when a single context cannot hold the full problem, and persisting intermediate reasoning so the next run can pick up where the last one left off. It is software architecture applied to the working memory of an intelligence, and it deserves the same rigor as the rest of the system.
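Two of these practices, versioning prompts and persisting intermediate state, can be sketched with the standard library alone. The file layout, field names, and the choice of a truncated SHA-256 digest as the version identifier are assumptions made for illustration.

```python
from __future__ import annotations

import hashlib
import json
from pathlib import Path
from typing import Optional


def prompt_version(prompt: str) -> str:
    """Derive a short, stable identifier from the prompt text itself."""
    return hashlib.sha256(prompt.encode("utf-8")).hexdigest()[:12]


def save_checkpoint(path: Path, prompt: str, summary: str, notes: list[str]) -> None:
    """Persist a summary of the work so far, tagged with the prompt version."""
    record = {
        "prompt_version": prompt_version(prompt),
        "summary": summary,
        "notes": notes,
    }
    path.write_text(json.dumps(record, indent=2), encoding="utf-8")


def load_checkpoint(path: Path, prompt: str) -> Optional[dict]:
    """Load the checkpoint only if it was produced under the same prompt."""
    if not path.exists():
        return None
    record = json.loads(path.read_text(encoding="utf-8"))
    if record["prompt_version"] != prompt_version(prompt):
        return None  # the prompt changed, so the saved state may be stale
    return record
```

Tying the checkpoint to the prompt version means a run never silently resumes from state that was produced under different instructions.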

Audit the pipeline, not the diff

When agents generate, test, and merge code at machine speed, manual code review stops being the primary control. The useful question shifts from whether a given diff is correct to whether the pipeline that produced it is trustworthy. That changes where engineering leaders spend their attention.

The work becomes defining the rules the agents must obey, encoding those rules into the workflow so violations cannot ship, and instrumenting the system to make agent decisions observable rather than opaque. Accountability still lives with humans. The visibility layer is what has to change, because reviewing every output line by line was already failing at human scale and is impossible at agent scale.
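To make "encoding those rules into the workflow" less abstract, here is a small sketch of a merge gate. The `Change` record, the two example rules, and the `infra/` path prefix are all hypothetical; a real pipeline would wire equivalent checks into its CI system.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Change:
    """Hypothetical summary of one agent-produced change."""

    author: str
    files: tuple[str, ...]
    tests_passed: bool


# A rule returns a violation message, or None when the change complies.
Rule = Callable[[Change], Optional[str]]


def require_tests(change: Change) -> Optional[str]:
    return None if change.tests_passed else "tests did not pass"


def protect_paths(change: Change) -> Optional[str]:
    touched = [f for f in change.files if f.startswith("infra/")]
    if touched:
        return "protected paths touched: " + ", ".join(touched)
    return None


def gate(change: Change, rules: list[Rule]) -> tuple[bool, list[str]]:
    """Evaluate every rule; the change may merge only if none are violated."""
    violations = [msg for rule in rules if (msg := rule(change)) is not None]
    return (not violations, violations)
```

Returning the full list of violations, not just a pass or fail flag, is what makes the decision observable: the same list can be logged, shown to a reviewer, or aggregated across agents.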

The leadership instinct that needs to die

The instinct worth letting go of is the one that conflates control with quality. Tight control over how every line gets written produced quality when the bottleneck was human typing speed and human carefulness. The bottleneck has moved. Quality now comes from clarity of intent at the top of the work and trustworthy validation underneath it, and engineering leaders who keep micromanaging the middle will find their teams quietly working around them.

Part III gets into the mechanics: the workflow changes, the tooling decisions, and the governance scaffolding that make this operating model actually run.

Jehad Alkhateeb

AI & Digital Experience Architect with 11+ years of experience building intelligent systems and leading engineering teams. Based in Toronto, Canada.
