AI Governance Part 5: The Operational Layer of Enterprise AI Governance

Part 5 of a series on enterprise AI governance. Skills as the control plane, phased rollout, audit cadences, incident response, and what the framework adds up to.

The infrastructure layer determines what is possible. The operational layer determines what actually happens. It has four components: skills as the control plane, phased rollout, audit cadences, and incident response.

Skills as the control plane

Free-form prompting puts the burden of correctness, safety, and compliance on every user, every time. That model does not scale, produces no shared auditable record, and puts regulatory exposure in the hands of whoever is least careful about what they type.

Move governance from the prompt layer to the capability layer. A skill (Claude Skills, custom GPTs, and vendor-specific assistants are all the same idea) is a versioned, tested, approved unit that users invoke rather than reconstruct. A marketing user does not type a prompt to create a brief in Sitecore. They invoke "Create Marketing Brief," which encapsulates the prompt, guardrails, tool access, and expected behavior. The skill is the unit of governance.

Skills make governance proportional to risk. Substantive review when a new capability is introduced. Zero review when an approved capability is invoked for normal work. That absence of per-invocation review is what lets the program operate at scale.
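To make the "review once, invoke freely" idea concrete, here is a minimal sketch of a registry check performed at invocation time. Everything in it is an assumption for illustration: `Skill`, `Status`, `SkillRegistry`, and `authorize` are hypothetical names, not part of Claude Skills or any vendor API. The point is only that invocation reduces to a lookup against a record that was already reviewed.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    """Hypothetical registry states; real registries may use others."""
    DRAFT = "draft"
    APPROVED = "approved"
    ACTIVE = "active"
    DEPRECATED = "deprecated"


@dataclass(frozen=True)
class Skill:
    """A versioned, approved unit of capability (illustrative fields only)."""
    name: str
    version: str
    status: Status
    allowed_groups: frozenset[str]
    tools: frozenset[str]


class SkillRegistry:
    """In-memory stand-in for a skill registry."""

    def __init__(self) -> None:
        self._skills: dict[str, Skill] = {}

    def register(self, skill: Skill) -> None:
        self._skills[skill.name] = skill

    def authorize(self, skill_name: str, user_groups: set[str]) -> Skill:
        """Return the skill if it is active and the user is in a target group.

        No human review happens here; the review already happened when the
        skill was approved. This is only a lookup.
        """
        skill = self._skills.get(skill_name)
        if skill is None:
            raise PermissionError(f"unknown skill: {skill_name}")
        if skill.status is not Status.ACTIVE:
            raise PermissionError(f"skill not active: {skill_name}")
        if not (skill.allowed_groups & user_groups):
            raise PermissionError(f"user not in a target group for: {skill_name}")
        return skill
```

A marketing user in the `marketing` group invoking "Create Marketing Brief" passes this check in constant time; a user outside the target groups, or a request for a deprecated skill, is refused without anyone having to read a prompt.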

The OWASP Agentic Skills Top 10, published early 2026, was the first standards artifact to recognize skills as a distinct security concern. AST10 documents real incidents including supply-chain poisoning of skill registries and CVE-level vulnerabilities in skill execution paths.

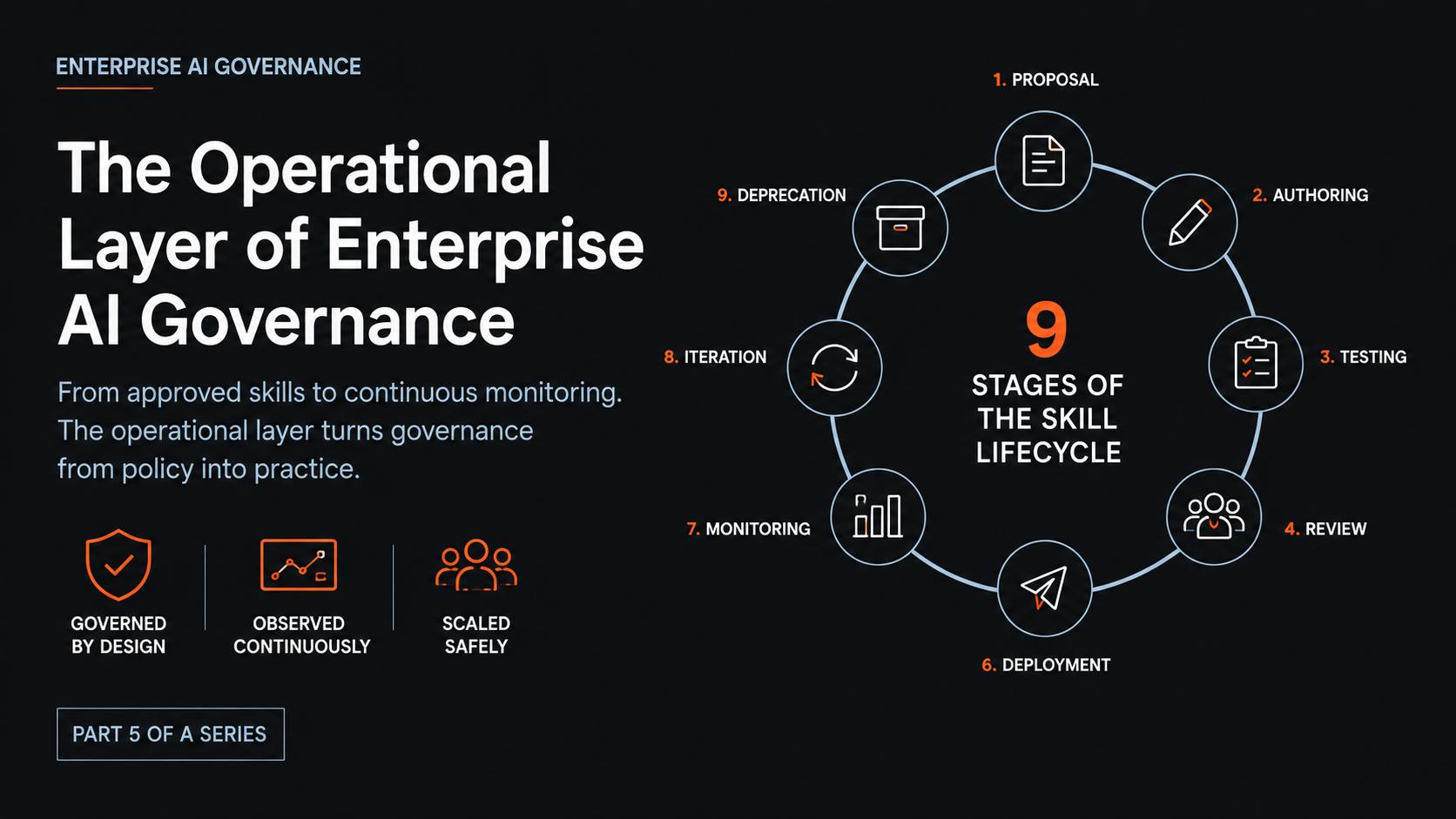

The nine-stage lifecycle

Every skill goes through nine stages. Owners, artifacts, and exit criteria defined for each. No skill reaches users without passing every gate.

Proposal. Function lead writes a one-page document: problem solved, target users, MCPs and tools required, data sensitivity, estimated invocation frequency, risk assessment. Exit: AI Governance Board accepts for development.

Authoring. Technical author writes SKILL.md: purpose, activation triggers, inputs, outputs, tools used, guardrails, five golden examples, data handling, owner. Exit: peer-reviewed and ready for testing.

Testing. Functional tests (golden examples plus 5 to 10 edge cases) and adversarial tests (prompt injection, scope escape, tool misuse, data exfiltration). Pass threshold defined ahead of time, typically 95% for regulated use. Exit: threshold met, no critical adversarial findings.

Review. Governance board (Security, Compliance, function lead, AI lead) reviews SKILL.md, test results, adversarial findings, data flow diagram, rollback plan. Exit: board approves, requests changes, or rejects.

Approval. Board chair signs the record. Registry updated to "approved, pending deployment." Exit: signed record in registry.

Deployment. Skills API push to specific groups (never org-wide by default). Users notified with documentation and example invocations. Exit: visible to target users, registry shows "active."

Monitoring. OTEL metrics on invocation count, success/failure rate, execution time, token consumption, tool call volume. Review triggers: invocation drop (usability), failure rate over 5% (malfunction), tool call volume 2x expected (runaway pattern), user escalation. Exit: operating within expected envelope.

Iteration. Issues produce a new version that re-enters Testing. Old version active during validation window, then deprecated. Exit: new version live.

Deprecation. 30-day notice. Migration guide. Removal from active groups via Skills API. Audit trail retained per regulation. Exit: skill no longer invokable, archive record retained.
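The review triggers listed under Monitoring can be sketched as a simple check over a metrics snapshot. This is a hedged illustration: `SkillMetrics` and `review_triggers` are hypothetical names, and the 50% invocation-drop ratio is an assumption, since the text above does not specify how large a drop should be. The 5% failure rate and the 2x tool call volume come from the Monitoring stage.

```python
from dataclasses import dataclass


@dataclass
class SkillMetrics:
    """One review window of metrics for a single skill (illustrative)."""
    invocations: int
    baseline_invocations: int   # expected invocations for the window
    failures: int
    tool_calls: int
    expected_tool_calls: int
    user_escalations: int


def review_triggers(m: SkillMetrics, drop_ratio: float = 0.5) -> list[str]:
    """Return the names of the review triggers that fired.

    drop_ratio is an assumed threshold: invocations below this fraction of
    baseline count as a drop (possible usability problem).
    """
    triggers: list[str] = []
    if m.baseline_invocations > 0 and m.invocations < drop_ratio * m.baseline_invocations:
        triggers.append("invocation_drop")
    # Failure rate over 5% suggests a malfunction.
    if m.invocations > 0 and m.failures / m.invocations > 0.05:
        triggers.append("failure_rate")
    # Tool call volume at 2x expected suggests a runaway pattern.
    if m.expected_tool_calls > 0 and m.tool_calls >= 2 * m.expected_tool_calls:
        triggers.append("tool_call_volume")
    if m.user_escalations > 0:
        triggers.append("user_escalation")
    return triggers
```

An empty list means the skill is operating within its expected envelope for that window.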

Skills without a documented rollback plan do not ship. Hard rule.
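One way to keep the gates honest is to encode them so that a skill record cannot advance without meeting them. The sketch below is a simplification under stated assumptions: `Stage`, `SkillRecord`, and `advance` are hypothetical names; only the Testing gate and the rollback-plan rule are modeled; the rollback check is placed at Review because that is where the rollback plan is examined; and the branch from Monitoring directly to Deprecation is omitted.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    PROPOSAL = 1
    AUTHORING = 2
    TESTING = 3
    REVIEW = 4
    APPROVAL = 5
    DEPLOYMENT = 6
    MONITORING = 7
    ITERATION = 8
    DEPRECATION = 9


PASS_THRESHOLD = 0.95  # typical threshold for regulated use, per the text


@dataclass
class SkillRecord:
    name: str
    stage: Stage = Stage.PROPOSAL
    test_pass_rate: float = 0.0
    critical_findings: int = 0
    has_rollback_plan: bool = False


def advance(record: SkillRecord) -> Stage:
    """Move the record to its next stage, or raise if the exit gate fails."""
    if record.stage is Stage.TESTING:
        if record.test_pass_rate < PASS_THRESHOLD or record.critical_findings > 0:
            raise ValueError("testing gate not met")
    if record.stage is Stage.REVIEW and not record.has_rollback_plan:
        raise ValueError("no documented rollback plan: skill does not ship")
    if record.stage is Stage.DEPRECATION:
        raise ValueError("deprecated skills do not advance")
    if record.stage is Stage.ITERATION:
        # A new version re-enters Testing rather than moving forward.
        record.stage = Stage.TESTING
    else:
        record.stage = Stage(record.stage.value + 1)
    return record.stage
```

The design choice worth noting is that the hard rule is enforced by code rather than by reviewer memory: a record with `has_rollback_plan=False` cannot leave Review.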

Phased rollout

Five phases, roughly six months from foundation to first users at scale. Each phase has observable success criteria that gate the next. The week ranges below are indicative: phases can overlap, and progression is governed by the gates rather than by calendar dates.

Phase 1: Foundation (weeks 1 to 4). Activate the AI platform with SSO, SCIM, domain capture, IP allowlisting. Enable compliance API, daily export to SIEM. Audit and tighten upstream system roles. Create platform projects per function.

Phase 2: Observability (weeks 5 to 8). Configure OTEL endpoint, stand up collector to SIEM. Decide prompt and parameter capture level. Establish baseline metrics for tool call frequency, error rates, connection failures.

Phase 3: Governance (weeks 9 to 12). Stand up skill registry and approval workflow. Author 3 to 5 pilot skills for highest-value, lowest-risk use cases. Build security and compliance review checklist. Document rollback procedure.

Phase 4: Pilot rollout (weeks 13 to 16). First users come online. Marketing pilot: small cohort, Sitecore MCP, read-only, monitored four weeks. Design pilot: small cohort, Figma MCP, read-only, monitored four weeks. Daily review of OTEL and Compliance API events. Policies adjusted based on observed behavior. Scope expanded only after baseline is established.

Phase 5: Advanced control (weeks 17+). Write access granted to pilot cohorts after the four-week window. MCP Gateway deployed if required. SIEM detection rules for anomalous MCP usage. Formal incident response playbook. Expand to additional teams and additional MCPs using the same pattern.
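The gating logic can be sketched in a few lines. This is an illustration under assumptions, not a prescribed implementation: `blocking_phase` and `may_grant_write` are hypothetical helpers, and how each phase's success criteria are evaluated is left to the caller as a boolean.

```python
from datetime import date, timedelta
from typing import Optional

PHASES = ["Foundation", "Observability", "Governance",
          "Pilot rollout", "Advanced control"]
PILOT_WINDOW = timedelta(weeks=4)  # read-only monitoring window from Phase 4


def blocking_phase(criteria_met: dict[str, bool]) -> Optional[str]:
    """Return the earliest phase whose success criteria are not yet met.

    Phases missing from the mapping count as not met. Returns None when
    every phase has passed its gate.
    """
    for phase in PHASES:
        if not criteria_met.get(phase, False):
            return phase
    return None


def may_grant_write(pilot_start: date, today: date,
                    baseline_established: bool) -> bool:
    """Write access requires a full pilot window and an established baseline."""
    return baseline_established and (today - pilot_start) >= PILOT_WINDOW
```

Because the check is on criteria rather than dates, a team that finishes Observability early can start Governance work without waiting for week 9, while a team with no baseline stays read-only regardless of how many weeks have passed.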

Industry maturity literature describes five levels (Initial, Repeatable, Defined, Managed, Optimizing). The phased rollout above takes a program from Level 1 to roughly Level 3 in six months. Levels 4 and 5 are the longer game; typical industry timeline is 18 to 36 months with sustained executive support.

Audit cadences

Continuous audit, on cadences matching what is being audited.

| Cadence | Activity | Owner |
|---|---|---|
| Daily | Compliance API events, OTEL stream, vendor audit logs ingested to SIEM. Errors and unusual tool calls flagged. | AI Operations |
| Weekly | Audit summary review: user activity, skill invocations, MCP errors. Anomalies flagged for follow-up. | AI Operations + Security |
| Monthly | Compliance report. Every agent action traces to identified user, approved skill, and policy decision. Rollback procedure tested on a non-production skill. Registry reviewed for stale skills. | Compliance |
| Quarterly | Independent skill catalog review. MCP scope reassessment. Vendor risk reassessment for AI platform and major MCP vendors. | AI Governance Board |

The monthly compliance report is the linchpin. If you cannot produce it from the SIEM in under a working week, the infrastructure has a gap. If you can produce it, you have an audit-ready artifact saying what the AI program did in the reporting period and how each action traces to authorized behavior.
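The core of that report is a traceability check: each agent action must resolve to an identified user, an approved skill, and a policy decision. A minimal sketch follows, assuming events have already been exported from the SIEM into records. The `AgentAction` shape and the `untraceable` function are hypothetical; real event schemas will differ by platform.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AgentAction:
    """One agent action as exported from the SIEM (assumed shape)."""
    action_id: str
    user_id: Optional[str]
    skill: Optional[str]
    policy_decision: Optional[str]


def untraceable(actions: list[AgentAction],
                approved_skills: set[str]) -> dict[str, list[str]]:
    """Map each action_id with a traceability gap to the links it is missing."""
    gaps: dict[str, list[str]] = {}
    for action in actions:
        missing: list[str] = []
        if not action.user_id:
            missing.append("user")
        if action.skill not in approved_skills:
            missing.append("approved_skill")
        if not action.policy_decision:
            missing.append("policy_decision")
        if missing:
            gaps[action.action_id] = missing
    return gaps
```

An empty result for the reporting period is the audit-ready statement; any non-empty result points at the infrastructure gap to close.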

This cadence pattern maps to NIST AI RMF MANAGE outcomes for incident response and continuous improvement, and to ISO/IEC 42001 clauses 9 (Performance Evaluation) and 10 (Improvement).

Incident response

Three severity levels. Defined rollback targets. Documented post-incident review.

Severity 1. Regulatory breach or security incident caused by an AI capability. Immediate rollback. Full incident review. Board notified. Targets: rollback within 30 minutes of detection; user notification within one hour.

Severity 2. Incorrect output affecting business decisions. Rollback within four hours. Root cause analysis required. Eval suite for affected skill expanded to cover the failure mode.

Severity 3. Degraded usability without business impact. Iteration cycle. No rollback required.
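The severity levels and their rollback targets can be held as data so that tooling, dashboards, and the playbook agree. The sketch below is illustrative: `Severity`, `classify`, and `rollback_deadline` are hypothetical names, and the two-flag classification is a deliberate simplification of what would be a human judgment in practice.

```python
from datetime import datetime, timedelta
from enum import IntEnum
from typing import Optional


class Severity(IntEnum):
    SEV1 = 1  # regulatory breach or security incident
    SEV2 = 2  # incorrect output affecting business decisions
    SEV3 = 3  # degraded usability without business impact


# Rollback targets from the definitions above; SEV3 needs no rollback.
ROLLBACK_TARGET: dict[Severity, Optional[timedelta]] = {
    Severity.SEV1: timedelta(minutes=30),
    Severity.SEV2: timedelta(hours=4),
    Severity.SEV3: None,
}


def classify(regulatory_or_security: bool,
             affects_business_decisions: bool) -> Severity:
    """Pick the most severe level that applies."""
    if regulatory_or_security:
        return Severity.SEV1
    if affects_business_decisions:
        return Severity.SEV2
    return Severity.SEV3


def rollback_deadline(sev: Severity, detected_at: datetime) -> Optional[datetime]:
    """Return when rollback must be complete, or None if none is required."""
    target = ROLLBACK_TARGET[sev]
    return None if target is None else detected_at + target
```

Keeping the targets in one table means a change to the policy (for example, tightening Severity 2) is a one-line edit that every consumer picks up.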

Rollback itself is straightforward when the infrastructure is in place: AI Operations removes the skill from active groups via Skills API. Previous version restored if available. This is fast because every approved skill has a documented rollback plan that was reviewed during approval.

Post-incident: documented root cause analysis in the registry. Eval suite updated to cover the failure mode. Governance board reviews policy changes. If a new class of failure emerged, the threat model updates and relevant existing skills re-test against the new category.

What this series adds up to

Five posts. The argument is the same across all of them.

Enterprise AI is doing things on your behalf now. The control model that worked for the chat era will not hold for the action era. The standards (NIST AI RMF, ISO/IEC 42001) exist and give you a defensible starting position. The threat frameworks (OWASP LLM Top 10, OWASP Agentic Top 10, MITRE ATLAS) exist and give you usable vocabulary. The operational patterns (skills lifecycle, phased rollout, audit cadences, incident response) exist and are no longer experimental.

What is missing in most organizations is execution.

The organizations that close that gap in the next twelve to eighteen months will deploy AI faster than the ones that do not, because governance is what gives leadership the confidence to expand scope. The organizations that do not close it will spend the same period either deploying nothing while waiting for clarity that is not coming, or producing the incidents that cautious organizations point to as justification for their caution.

Closing the gap is the work. The framework is how.

Sources & References

Skills and capabilities layer

OWASP Agentic Skills Top 10 (AST10): https://owasp.org/www-project-agentic-skills-top-10/

Claude Skills documentation: https://docs.claude.com/en/docs/build-with-claude/skills

Maturity and rollout

Liminal enterprise AI governance implementation guide (5-level maturity model, 18 to 36 month progression): https://www.liminal.ai/blog/enterprise-ai-governance-guide

Microsoft Inside Track on enterprise AI maturity in five steps: https://www.microsoft.com/insidetrack/blog/enterprise-ai-maturity-in-five-steps-our-guide-for-it-leaders/

Dataversity on AI governance maturity framework: https://www.dataversity.net/articles/building-a-practical-framework-for-ai-governance-maturity-in-the-enterprise/

Audit and operational standards

NIST AI RMF (MANAGE function outcomes, incident response): https://www.nist.gov/itl/ai-risk-management-framework

ISO/IEC 42001 (clauses 9 and 10, Performance Evaluation and Improvement): https://www.iso.org/standard/42001

Databricks practical AI governance framework: https://www.databricks.com/blog/practical-ai-governance-framework-enterprises

Threat frameworks (recap from series)

OWASP Top 10 for LLM Applications: https://genai.owasp.org/llm-top-10/

OWASP Top 10 for Agentic Applications (2026): https://genai.owasp.org/resource/owasp-top-10-for-agentic-applications-for-2026/

MITRE ATLAS: https://atlas.mitre.org/

Errico et al., "Securing the Model Context Protocol": https://arxiv.org/abs/2511.20920

Jehad Alkhateeb

AI & Digital Experience Architect with 11+ years of experience building intelligent systems and leading engineering teams. Based in Toronto, Canada.
