AI Governance Part 4: The Infrastructure Layer of Enterprise AI Governance

Part 4 of a series on enterprise AI governance. Identity, three-layer observability, user grouping, and policy enforcement that produces a defensible audit trail.

Infrastructure determines whether the operational layer can do its job. Get it wrong and no policy fixes it. Four components consistently matter.

Identity and access

Standard enterprise hygiene first. SSO and SCIM from the corporate identity provider so user lifecycle is automatic and synchronized. Domain capture so personal accounts on your email domain stay under enterprise management. IP allowlisting on corporate networks to reduce the blast radius of credential compromise. None of this is new. Skipping any of it makes the AI-specific work substantially harder.

The AI-specific question is what authority the agent inherits when acting on a user's behalf. In current MCP implementations and most tool-use standards, the agent's authority is the user's OAuth scope in the upstream system. Marketing user with broad Sitecore write access? The agent gets it too. Engineer with admin GitHub permissions? The agent gets those. The Kiteworks analysis of the MCP enterprise governance problem documents four structural gaps in default implementations: access control, audit trail completeness, per-user authorization, and compliance documentation.

Three reinforcing layers of access control:

IdP groups are the source of truth for business function membership.

Platform RBAC restricts which MCPs and skills each group sees in the AI platform.

Upstream system roles in Sitecore, Figma, and elsewhere enforce least privilege at the source. An agent inherits the user's permissions, so the upstream role is the de facto access control for agent actions.

Add a fourth when sensitivity demands it: tool-level allow and deny lists at an MCP Gateway. Defense in depth. A misconfiguration at one layer is caught at the next.
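The layering above can be sketched as a single ordered check. This is a minimal illustration, not how any particular platform implements it: the group names, the `PLATFORM_RBAC` mapping, the `GATEWAY_DENY` list, and the permission strings are all hypothetical. The point is only that each layer can refuse independently and that the refusal names the layer that caught it.

```python
# Hypothetical data: which MCPs each IdP group can see in the AI platform.
PLATFORM_RBAC = {
    "marketing": {"sitecore"},
    "design": {"figma"},
    "engineering": {"figma", "sitecore"},
}

# Hypothetical gateway deny list of (mcp, tool) pairs.
GATEWAY_DENY = {("sitecore", "delete_item")}


def authorize(idp_groups, upstream_perms, mcp, tool, required_perm):
    """Return (allowed, layer), where layer names the check that decided."""
    # Layer 1: IdP groups are the source of truth for membership.
    known = [g for g in idp_groups if g in PLATFORM_RBAC]
    if not known:
        return (False, "idp_group")
    # Layer 2: platform RBAC decides which MCPs the group can see.
    if not any(mcp in PLATFORM_RBAC[g] for g in known):
        return (False, "platform_rbac")
    # Layer 3: the upstream role must grant the permission the tool needs.
    if required_perm not in upstream_perms:
        return (False, "upstream_role")
    # Layer 4 (optional): tool-level deny list at the gateway.
    if (mcp, tool) in GATEWAY_DENY:
        return (False, "gateway")
    return (True, "allowed")
```

In this sketch a marketing user with Sitecore write access still cannot call `delete_item`, because the gateway layer catches what the upstream role would have permitted.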

The discipline that closes the loop is adding an AI access review to the standard access governance cycle. The question is no longer just "should this user have this permission for their own work." It is also "should an autonomous agent acting on this user's behalf have this permission in its tool set." Sometimes the same answer, sometimes not.

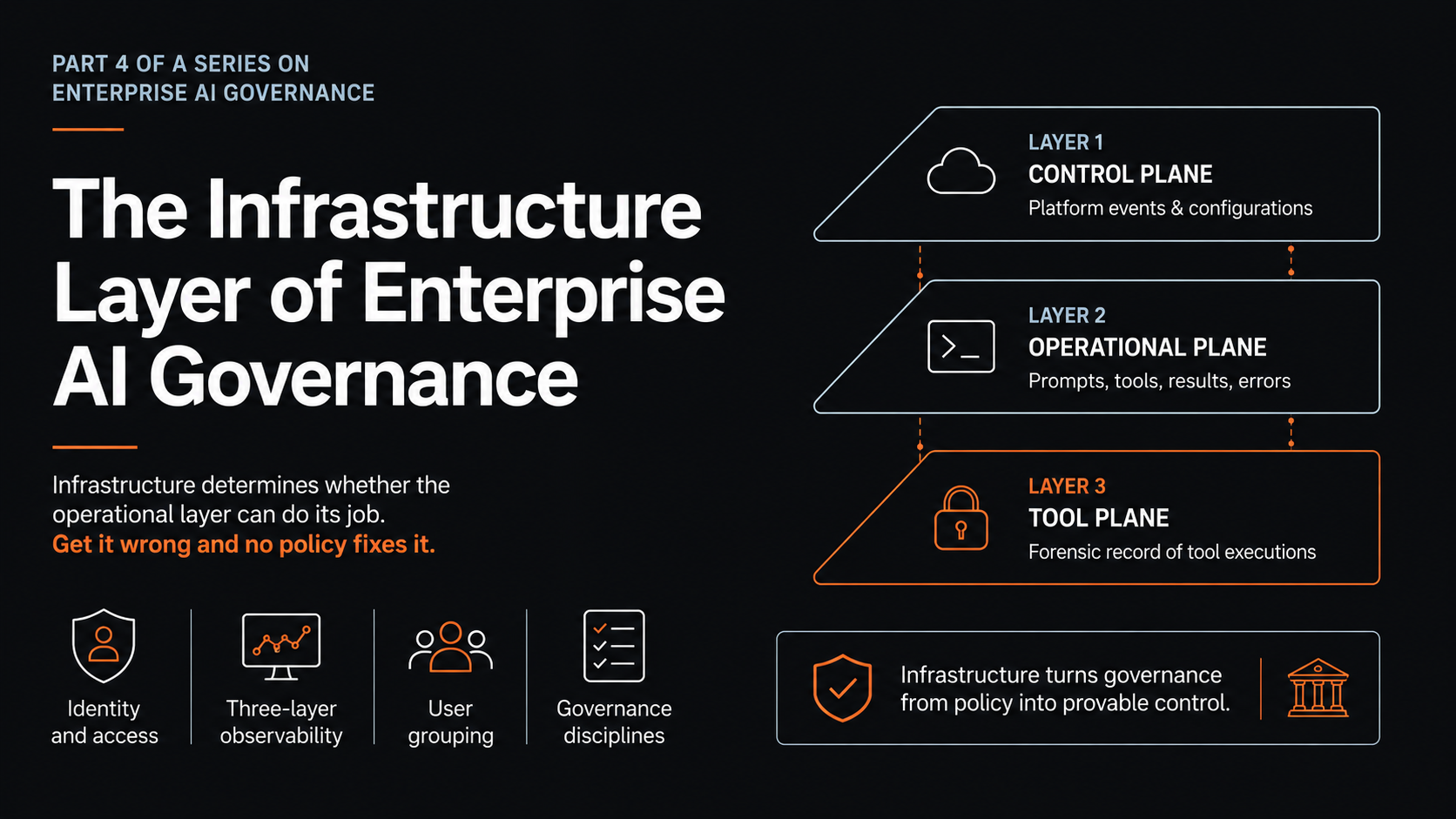

Three-layer observability

This is where most enterprise deployments fail an audit. No single telemetry source covers the full picture. You need three layers feeding the same SIEM, correlated on session identifier.

Layer 1: Control Plane. The compliance API from your AI platform vendor (Anthropic's Compliance API is the reference example). Captures roughly 30 platform-level event types: login and logout, SCIM provisioning, project creation, file uploads, skill uploads and deletions, role assignments, API key lifecycle, workspace configuration changes. Answers: who logged in, who created what, who configured what. Daily export to SIEM. Necessary. Not sufficient.

Layer 2: Operational Plane. OpenTelemetry (OTEL) export from the AI platform. Captures user prompts, MCP server connections, tool calls including name and parameters, tool results, file access, and errors. Answers: what the agent attempted to do, what tools it called, what came back, what failed. Configure the OTEL endpoint centrally in the admin console; stream through a collector to SIEM. Two switches matter: full tool detail (keep it always on for forensic value) and full prompt content (a data classification decision). Most regulated orgs capture full prompts for compliance-sensitive functions and metadata only for general use.

Layer 3: Tool Plane. An MCP Gateway sits between AI clients and remote MCPs. It holds the OAuth credentials and produces a complete forensic record of every tool invocation, including trace ID, user attribution, full parameters, full response, and the policy decisions the gateway made. Answers: exactly what reached the downstream system, exactly what came back. For high-sensitivity deployments, this layer is not optional. Constraint: gateway interception works only for desktop and programmatic clients, where the MCP endpoint is configurable. Web clients connect directly to vendor MCPs and cannot be gateway-routed. For web users, the forensic layer is partially replaced by vendor-native audit logs from the downstream systems, correlated in SIEM.

A defensible audit answer joins all three on session ID: "On this date, user X had session S. Within session S, the agent decided to call tool T with parameters P. Tool T executed against downstream system D and returned R. Action A was the policy decision that allowed it." That is the answer regulators ask for. Producing it is the infrastructure work.
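The join behind that audit answer can be sketched in a few lines. In practice it would be a SIEM query; the Python below only shows its shape. The event field names (`session_id`, `trace_id`, `user`, `tool`, `parameters`, `downstream`, `response`, `policy_decision`) are assumptions for illustration, not any vendor's schema, and matching individual calls on `trace_id` within a session is also an assumption.

```python
def audit_trail(session_id, control, operational, gateway):
    """Join the three telemetry layers for one session.

    control, operational, and gateway are lists of dicts with the
    hypothetical fields described above.
    """
    # Layer 1: who owned the session.
    user = next(
        (e["user"] for e in control if e["session_id"] == session_id), None
    )
    # Layer 3: index gateway records for this session by trace ID.
    gw_by_trace = {
        g["trace_id"]: g for g in gateway if g["session_id"] == session_id
    }
    rows = []
    # Layer 2: each tool call the agent decided to make.
    for call in operational:
        if call["session_id"] != session_id:
            continue
        gw = gw_by_trace.get(call["trace_id"])
        rows.append({
            "user": user,
            "session_id": session_id,
            "tool": call["tool"],
            "parameters": call["parameters"],
            # None marks a call with no gateway record (for example, a web client).
            "downstream": gw["downstream"] if gw else None,
            "response": gw["response"] if gw else None,
            "policy_decision": gw["policy_decision"] if gw else None,
        })
    return rows
```

Rows with empty gateway fields are the ones that need vendor-native audit logs from the downstream system to complete the picture.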

The NIST AI RMF describes this requirement abstractly under its MEASURE 2 outcomes. The Securing MCP paper calls it provenance tracking across agent workflows. Same operational reality.

User grouping

Three groups cover most initial regulated rollouts. Define them in the IdP. Sync via SCIM. Drive RBAC automatically.

Marketing. Sitecore MCP. Marketing skills. Read-only at pilot.

Design. Figma MCP. Design skills. Read-only at pilot.

Engineering. Figma and Sitecore MCPs. Engineering skills plus Claude Code or equivalent. Broader scope; power users.

Two operating rules:

No capability deployed org-wide by default. New skills and new MCP integrations deploy to specific groups, with explicit reasoning. Broader deployment requires explicit decision.

Read-only at first. Every pilot starts with read access only. Write access granted only after a four-week monitoring window in which OTEL data confirms the agent is behaving within expected patterns. This single rule prevents the most common failure mode: enthusiastic early deployments writing to production before operators can see what the agent is doing. Veeam's MCP guidance explicitly recommends read-only intelligence as the default posture, expanding only as governance, approvals, and monitoring mature.

This grouping model maps to the ISO 42001 Annex A access management controls and to the NIST AI RMF MAP function (contextual scoping based on operational environment).
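The read-only-first rule is simple enough to encode as a gate. The sketch below assumes the OTEL review is summarized as a count of anomalies observed during the window; that summary, the function name, and the zero-anomaly threshold are illustrative choices, not part of any standard.

```python
from datetime import date, timedelta

# The four-week monitoring window from the rule above.
MONITORING_WINDOW = timedelta(weeks=4)


def write_access_eligible(pilot_start, today, anomalies_in_window):
    """True only if the full window has elapsed with no anomalies.

    anomalies_in_window is a hypothetical count produced by whoever
    reviews the OTEL data for the pilot group.
    """
    window_elapsed = (today - pilot_start) >= MONITORING_WINDOW
    return window_elapsed and anomalies_in_window == 0
```

A gate like this only reports eligibility. Granting write access remains an explicit decision, in line with the first operating rule.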

Policy enforcement at the tool plane

This control does not exist natively in MCP servers, so it has to be added through gateway architecture.

Even when an agent has been granted tool access through the upstream role, you may want additional constraints on how the tool can be used. Read access to a customer database is different from the ability to retrieve every record in one query. Write access to a CMS is different from the ability to delete published content in production. A gateway encodes these distinctions as policy rather than relying on the upstream system's native role model.

A gateway can implement: "this tool allowed only with parameters in this range," "this tool allowed only during business hours," "this tool requires second user approval before forwarding," rate limiting per user, anomaly detection on tool call patterns, and data loss prevention on agent traffic.
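Three of those rules (parameter range, business hours, second approval) can be sketched as a small policy evaluator. Everything here is hypothetical: the tool names, the `POLICIES` table, the 09:00 to 17:00 weekday window, and the default-deny behavior for tools with no policy entry are assumptions made for the example, not features of any specific gateway product.

```python
from datetime import datetime

# Hypothetical per-tool policies.
POLICIES = {
    "crm.query_customers": {"max_limit": 100},
    "cms.publish": {"business_hours_only": True},
    "cms.delete_item": {"requires_approval": True},
}

# Assumed business hours: 09:00 to 16:59 in the gateway's local time.
BUSINESS_HOURS = range(9, 17)


def evaluate(tool, params, now, approver=None, user=None):
    """Return 'allow', 'deny', or 'needs_approval' for one tool call."""
    policy = POLICIES.get(tool)
    if policy is None:
        return "deny"  # Design choice in this sketch: unknown tools are denied.

    max_limit = policy.get("max_limit")
    # A missing limit is treated as unbounded, so it is denied too.
    if max_limit is not None and params.get("limit", max_limit + 1) > max_limit:
        return "deny"

    if policy.get("business_hours_only"):
        is_weekend = now.weekday() >= 5
        if is_weekend or now.hour not in BUSINESS_HOURS:
            return "deny"

    # The second approver must be a different person from the caller.
    if policy.get("requires_approval") and (approver is None or approver == user):
        return "needs_approval"

    return "allow"
```

For example, `evaluate("crm.query_customers", {"limit": 5000}, datetime.now())` is denied regardless of the user's upstream role, which is the distinction between read access and bulk retrieval drawn above. Whatever the gateway returns is the policy decision that belongs in the audit record.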

Deploy a gateway when the user population includes desktop clients (a web-only population gets only partial coverage), when sensitivity demands forensic-grade audit, and when the regulatory environment requires it. Skip it for low-sensitivity contexts where vendor-native audit is sufficient. Most mature programs land in the middle, with gateways for high-sensitivity populations and high-sensitivity MCPs.

The audit test

A useful test for whether the infrastructure is in place: can you answer these from your SIEM in under an hour without a forensic effort?

For any user: list of AI sessions in a defined time window, including tools called, parameters, data accessed.

For any MCP integration: list of users who accessed it, tool call volume per user.

For any anomalous event detected: traceable back to specific user, session, decision.

For any approved skill: invocation history, who used it, when, with what outcome.

For any platform configuration change: responsible user, timestamp, before-and-after state.

If yes to all, the infrastructure is doing its job. If no, the gap is the work still ahead.
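As one concrete instance, the second question (users and tool call volume per MCP integration) reduces to a group-and-count over tool call events. A real deployment would express this in the SIEM's own query language. The Python below is a stand-in over exported events, and the `user` and `mcp_server` field names are assumptions.

```python
from collections import Counter


def tool_calls_per_user(events, mcp_server):
    """Count tool calls per user for one MCP integration.

    events is a list of dicts with hypothetical 'user' and
    'mcp_server' fields, one dict per tool call.
    """
    return Counter(
        e["user"] for e in events if e["mcp_server"] == mcp_server
    )
```

If answering this takes more than a filter and a count, the events are probably missing user attribution or a consistent integration identifier, and that is the gap to close first.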

Next post: the operational layer that sits on this infrastructure. Skills, lifecycle, phased rollout, audit cadence, incident response.

Sources & References

Standards and frameworks

NIST AI Risk Management Framework (MEASURE function and outcomes): https://www.nist.gov/itl/ai-risk-management-framework

ISO/IEC 42001:2023 (Annex A technical controls, access management): https://www.iso.org/standard/42001

Observability and platform

Anthropic Claude Admin API and Compliance API documentation: https://docs.claude.com/en/docs/build-with-claude/admin-api

OpenTelemetry: https://opentelemetry.io/

Red Hat on MCP authorization complexity in enterprise: https://www.redhat.com/en/blog/model-context-protocol-mcp-understanding-security-risks-and-controls

MCP enterprise governance

Errico et al., "Securing the Model Context Protocol" (provenance tracking, gateway architecture, five-layer defense): https://arxiv.org/abs/2511.20920

Kiteworks on MCP enterprise security gaps: https://www.kiteworks.com/cybersecurity-risk-management/model-context-protocol-enterprise-security/

Veeam MCP security risks guide (read-only posture recommendation): https://www.veeam.com/blog/model-context-protocol-security-risks.html

MCP Security Best Practices (official spec): https://modelcontextprotocol.io/specification/draft/basic/security_best_practices

Identity and least privilege

Pillar Security on MCP credential aggregation risks: https://www.pillar.security/blog/the-security-risks-of-model-context-protocol-mcp

CoSAI Workstream 4: MCP shadow servers and authorization bypass: https://github.com/cosai-oasis/ws4-secure-design-agentic-systems/blob/main/model-context-protocol-security.md

Jehad Alkhateeb

AI & Digital Experience Architect with 11+ years of experience building intelligent systems and leading engineering teams. Based in Toronto, Canada.
