The DORA AI Capabilities Model: Building the Foundation for AI-Driven Performance

A data-backed framework for scaling AI’s impact across people, platforms, and process.

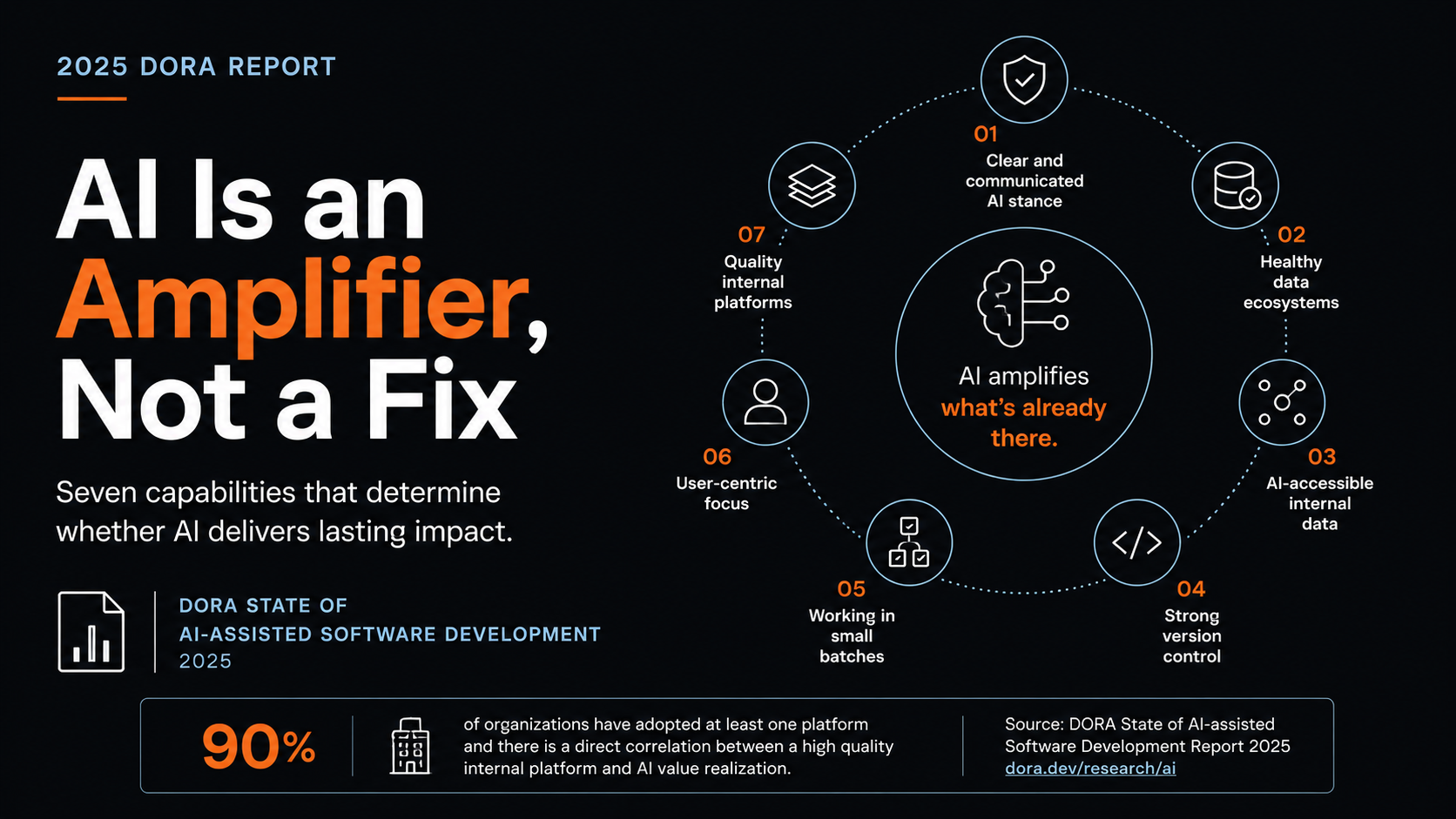

The 2025 DORA State of AI-assisted Software Development report, drawing on insights from over 100 hours of qualitative data and survey responses from nearly 5,000 technology professionals, lands on a finding that should reshape how technology leaders fund AI work. AI does not fix a team; it amplifies what is already there. High-performing organizations get a real accelerator. Struggling ones get faster chaos. The implication is uncomfortable for anyone whose AI strategy starts and ends with procurement, because tool adoption without foundational investment tends to produce localized productivity gains that downstream dysfunction reliably erases.

The companion DORA AI Capabilities Model formalizes this with seven capabilities that substantially amplify the benefits of AI, identified through 78 in-depth interviews, existing literature, and subject-matter expert input before validation against the broader survey population. What the seven share is a structural quality. Each one describes the system AI operates inside, not the AI itself. Reading them as a procurement checklist misses the point. Reading them as a funding map for the next two years gets closer.

Policy clarity precedes adoption

The first capability is a clear and communicated AI stance, which sounds soft until you look at what it actually moves. When developers understand what is permitted, what is encouraged, and where the boundaries sit, AI's positive effects on individual effectiveness and organizational performance both grow, and its typically neutral effect on friction turns beneficial. The mechanism is straightforward. In the absence of a clear stance, developers either underuse AI out of caution about data handling and IP exposure, or overuse it without regard to either, and both failure modes scale badly. A written policy covering permitted tools, expected behaviours, and the organization's position on experimentation removes the ambiguity and gives developers the cover they need to integrate AI into real work rather than guess at the edges.

Data context is where most organizations stall

Two of the seven capabilities concern data, and they should be read together. Healthy data ecosystems refers to whether internal data is high-quality, unified, and accessible. AI-accessible internal data measures whether AI tools are actually wired into those systems and using them as context. The first amplifies AI's organizational impact. The second amplifies benefits for individual effectiveness and code quality. Read together, the message is that generic foundation models deliver generic value, and company-specific context is what produces the gap between AI as autocomplete and AI as a real engineering capability. This requires engineering investment well beyond license procurement, which is where most organizations stall.

Engineering discipline gets more important, not less

Two more capabilities sit at the level of engineering practice. Strong version control, specifically commit frequency and rollback usage, amplifies AI's impact on individual and team performance. This matters because AI accelerates software development, and that acceleration can expose weaknesses downstream. Version control becomes the psychological safety net that lets teams experiment without fear of cascading breakage. Working in small batches, a long-standing DORA capability, amplifies AI's effect on product performance and reduces friction for development teams. There is an honest trade-off the report names openly. Small batches can slightly reduce AI's perceived individual effectiveness because AI is good at producing large outputs quickly, but the organizational return on small batches is larger and more sustainable. Speed at the individual level that produces instability at the system level is not a win, and the report's data on AI's negative relationship with software delivery stability backs that up.
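For teams that want a rough read on these practices from their own history, the sketch below computes commit frequency, a batch-size proxy, and the share of rollback commits. It is a minimal illustration, not how DORA measures the capabilities (the report relies on survey data). The `Commit` record, its fields, and the `summarize` function are hypothetical names, and treating lines changed as a proxy for batch size is an assumption.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median


@dataclass
class Commit:
    # Hypothetical record; populate it from whatever your VCS tooling exports.
    day: date            # calendar day the commit landed
    lines_changed: int   # added + deleted lines, a rough proxy for batch size
    is_revert: bool      # True if the commit rolls back an earlier change


def summarize(commits: list[Commit]) -> dict[str, float]:
    """Return commit frequency, median batch size, and revert share."""
    if not commits:
        raise ValueError("need at least one commit")
    days = [c.day for c in commits]
    span_days = (max(days) - min(days)).days + 1  # inclusive window
    return {
        "commits_per_day": len(commits) / span_days,
        "median_lines_changed": float(median(c.lines_changed for c in commits)),
        "revert_share": sum(c.is_revert for c in commits) / len(commits),
    }


# Made-up sample history, for illustration only.
history = [
    Commit(date(2025, 1, 6), 40, False),
    Commit(date(2025, 1, 6), 12, False),
    Commit(date(2025, 1, 7), 300, False),
    Commit(date(2025, 1, 8), 300, True),
    Commit(date(2025, 1, 9), 25, False),
]
print(summarize(history))
```

A rising median batch size alongside a rising revert share would be one hint that AI-generated volume is outpacing the team's ability to review and ship in small steps.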

Direction and infrastructure decide whether velocity matters

The last two capabilities sit at the level of organizational design. User-centric focus, meaning how deeply teams connect their work to end-user outcomes, amplifies AI's positive effect on team performance, and the report carries an explicit warning that in the absence of a user-centric focus, AI adoption can have a negative impact on team performance. The logic is intuitive once stated. AI accelerates whatever direction you are already pointed, so a team aimed at the wrong outcome will reach the wrong outcome faster. Quality internal platforms, the seventh capability, provide the shared, scalable substrate that lets AI value distribute across an organization rather than stay trapped in individual teams. According to the DORA research, 90% of organizations have adopted at least one platform, and there is a direct correlation between a high-quality internal platform and an organization's ability to realize the value of AI. Platforms can raise perceived friction through guardrails and governance, which is a deliberate trade for the consistency and compliance that make safe scaling possible.

What this means for funding decisions

The DORA findings push AI strategy out of the procurement column and into the platform and engineering capability columns. If the policy is unclear, fund clarity before tools. If internal data is fragmented and AI cannot reach it, fund the data work and the secure integration layer before expecting tool ROI. If version control discipline is weak and batches are large, those practices need investment before AI volume makes the existing weaknesses unmanageable. None of this is new advice for organizations already operating at a high level, but the report makes the cost of skipping it more concrete, because AI raises the stakes on both sides of whatever system it lands in. The 90% adoption number means the experiment phase is over. The differentiation now comes from the foundation underneath.
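The ordering in the paragraph above can be written down as a simple decision rule. The sketch below is one possible encoding, not something the report prescribes. The `Assessment` fields and the `funding_priorities` function are hypothetical, and it assumes that a gap on either side of a pair (for example, data that is unified but not reachable by AI tools) is enough to trigger the investment.

```python
from dataclasses import dataclass


@dataclass
class Assessment:
    # Hypothetical self-assessment flags; how each is judged is up to you.
    policy_is_clear: bool
    data_is_unified: bool
    ai_can_reach_data: bool
    version_control_is_disciplined: bool
    batches_are_small: bool


def funding_priorities(a: Assessment) -> list[str]:
    """List foundational investments to fund before more AI tooling."""
    priorities = []
    if not a.policy_is_clear:
        priorities.append("AI policy clarity")
    # Assumption: either data gap alone justifies the data investment.
    if not (a.data_is_unified and a.ai_can_reach_data):
        priorities.append("data work and secure integration layer")
    # Assumption: either practice gap alone justifies the practice investment.
    if not (a.version_control_is_disciplined and a.batches_are_small):
        priorities.append("version control and small-batch practices")
    return priorities


print(funding_priorities(Assessment(True, False, False, True, False)))
```

An empty list means the foundations named here are in place, which is the point at which tool spending has the best chance of showing a return.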

Sources

Google Cloud Blog, Announcing the 2025 DORA Report:

https://cloud.google.com/blog/products/ai-machine-learning/announcing-the-2025-dora-report

Google Cloud Blog, Introducing DORA's inaugural AI Capabilities Model

DORA Research, Artificial Intelligence

2025 DORA AI Capabilities Model (full PDF):

https://services.google.com/fh/files/misc/2025_dora_ai_capabilities_model.pdf

2025 DORA State of AI-assisted Software Development Report (download):

https://cloud.google.com/resources/content/2025-dora-ai-assisted-software-development-report

IT Revolution, AI's Mirror Effect: How the 2025 DORA Report Reveals Your Organization's True Capabilities

Jehad Alkhateeb

AI & Digital Experience Architect with 11+ years of experience building intelligent systems and leading engineering teams. Based in Toronto, Canada.
