AI Governance Part 2: What an AI Governance Framework Actually Covers

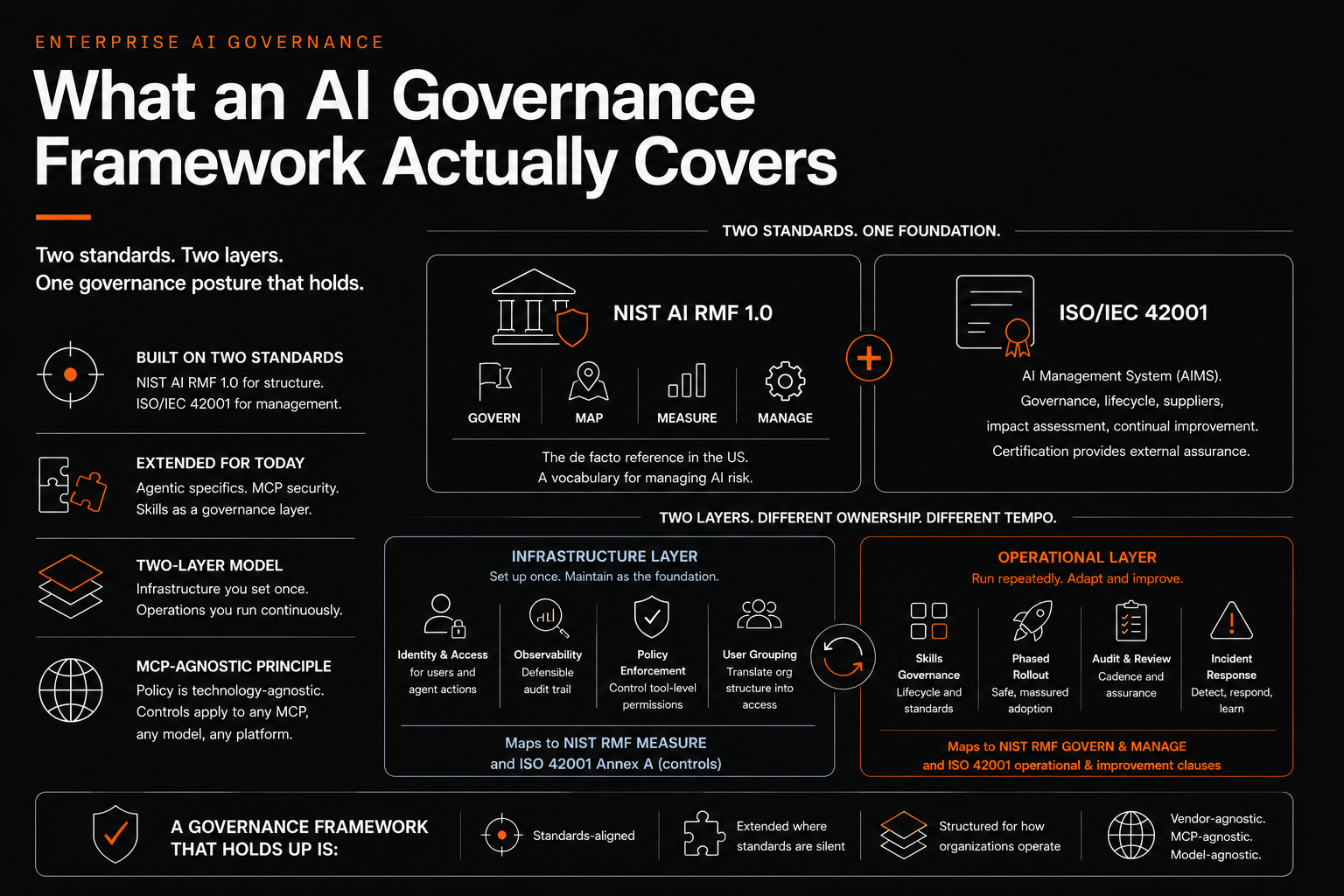

Part 2 of a series on enterprise AI governance. The standards you build on (NIST AI RMF, ISO 42001), the gaps they leave on agents and MCP, and the two-layer operational model that closes them.

A working AI governance framework has two reference standards and three operational extensions. Most policy documents stop at the references, which is why they are unusable when someone asks whether a specific deployment can ship.

The two standards you build on

NIST AI RMF 1.0 organizes AI risk around four functions. GOVERN sets the culture, accountability, and policy environment. MAP contextualizes a specific AI system, identifying users, data flows, and context-specific risks. MEASURE provides quantitative and qualitative methods for tracking risk. MANAGE allocates resources to treat risks, documents residual risk, and responds to incidents. The RMF is voluntary, technology-agnostic, and structured as a vocabulary rather than a checklist. It is the de facto reference in the United States, and its four functions are the structural language that federal agencies, financial institutions, and many large tech organizations use.

ISO/IEC 42001 is the international AI management system standard, in the same family as ISO 27001 for information security. It defines what a complete AI Management System (AIMS) consists of, including governance structures, AI impact assessment, lifecycle management, supplier oversight, and continual improvement. Certification provides external assurance, which matters in regulated procurement.

Most mature programs use both: NIST RMF for the conceptual scaffolding and ISO 42001 for the management system blueprint.

Where they go quiet

Three gaps the standards do not yet close.

Agentic specifics. Both NIST RMF 1.0 and ISO 42001 were drafted when generative AI was the dominant concern and agentic AI was a research topic. They tell you to identify, treat, and continuously improve. They do not tell you what observability looks like when an agent is composing tool calls in real time. The Cloud Security Alliance's Agentic Profile for the NIST AI RMF and the OWASP Top 10 for Agentic Applications (December 2025) are starting to close this gap, but neither is yet an enforceable management requirement.

Model Context Protocol. MCP did not exist when ISO 42001 was finalized and post-dates the NIST RMF by almost two years. The November 2025 arXiv paper "Securing the Model Context Protocol: Risks, Controls, and Governance" proposes a five-layer defense: authentication and scoped authorization, provenance tracking, sandboxing, inline policy enforcement, and centralized governance through gateway architectures. This is the operational consensus emerging in 2026, but it is not yet codified in either standard.
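One way to make the five layers usable in a review is to treat them as a coverage checklist per MCP server. The sketch below is illustrative only: the layer names are paraphrased from the paragraph above, while `McpServerRecord`, `controls_in_place`, and `missing_layers` are hypothetical names invented for this example, not anything defined by the paper or by MCP itself.

```python
from dataclasses import dataclass, field

# The five defense layers as summarized above (identifiers are our own shorthand).
DEFENSE_LAYERS = (
    "authentication_and_scoped_authorization",
    "provenance_tracking",
    "sandboxing",
    "inline_policy_enforcement",
    "centralized_gateway_governance",
)


@dataclass
class McpServerRecord:
    """Hypothetical inventory entry for one MCP server under review."""
    name: str
    controls_in_place: set[str] = field(default_factory=set)


def missing_layers(server: McpServerRecord) -> list[str]:
    """Return the defense layers this server does not yet cover, in layer order."""
    return [layer for layer in DEFENSE_LAYERS if layer not in server.controls_in_place]


# Example: a server that has authentication and sandboxing but nothing else.
example_server = McpServerRecord(
    name="example-internal-mcp",  # hypothetical server name
    controls_in_place={"authentication_and_scoped_authorization", "sandboxing"},
)
gaps = missing_layers(example_server)
```

A list like `gaps` gives a reviewer a concrete answer to "which layers are still open for this server" without tying the framework to any one product.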

Skills as a governance layer. ISO 42001 covers system lifecycle. It does not address skills explicitly. The OWASP Agentic Skills Top 10, published in early 2026, was one of the first published references to engage with the skill layer as a security concern. Most regulated programs will need to extend governance to cover skills because that is where practical control actually lives.

The two-layer operational model

What works across regulated enterprises is a two-layer model, structured around ownership and tempo.

The Infrastructure Layer is set up once, configured correctly, and maintained as the foundation. It covers identity and access for AI users and the agents acting on their behalf, the observability stack producing a defensible audit trail, the policy enforcement mechanisms that determine what an agent is allowed to do at the tool level, and the user grouping logic that translates organizational structure into access. This layer maps to NIST RMF MEASURE and to ISO 42001 Annex A technical controls.
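To make the infrastructure layer concrete, here is a minimal sketch of tool-level policy enforcement driven by user groups, with every decision written to an audit trail. All names (`USER_GROUPS`, `GROUP_TOOL_ALLOWLIST`, `ToolCall`, `authorize`, and the example users and tools) are hypothetical; a real deployment would source groups from an identity provider and ship audit records to its observability stack rather than an in-memory list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCall:
    user: str   # the human the agent is acting for
    agent: str  # the agent making the call
    tool: str   # the tool the agent wants to invoke


# Hypothetical user grouping: organizational structure translated into groups.
USER_GROUPS = {
    "alice": {"marketing"},
    "bob": {"engineering"},
}

# Hypothetical per-group allowlist of tools.
GROUP_TOOL_ALLOWLIST = {
    "marketing": {"cms.read", "cms.draft"},
    "engineering": {"repo.read", "repo.comment"},
}

# In-memory stand-in for the audit trail.
AUDIT_LOG: list[dict] = []


def authorize(call: ToolCall) -> bool:
    """Allow the call only if one of the user's groups allowlists the tool.

    Unknown users and unlisted tools are denied by default, and every
    decision (allow or deny) is recorded.
    """
    groups = USER_GROUPS.get(call.user, set())
    allowed = any(call.tool in GROUP_TOOL_ALLOWLIST.get(g, set()) for g in groups)
    AUDIT_LOG.append(
        {"user": call.user, "agent": call.agent, "tool": call.tool, "allowed": allowed}
    )
    return allowed
```

The design choice worth noting is deny-by-default combined with logging both outcomes: an audit trail that records only successful calls cannot show that a control actually blocked anything.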

The Operational Layer is everything that happens repeatedly over time. It covers the skills governance lifecycle, the phased rollout methodology, the audit and review cadences, and incident response. This layer maps to NIST RMF GOVERN and MANAGE and to the ISO 42001 operational and improvement clauses.

Infrastructure decisions are made by few people, are expensive to change, and need to be right before deployment volume scales. Operational decisions are made by many people, are made repeatedly, and need to be fast enough that governance does not become the bottleneck. These are different organizational problems and they tend to be owned by different groups. Conflating them produces frameworks that are slow at one tempo and unwieldy at the other.

The MCP-agnostic principle

A common scope error is writing the framework around a specific vendor or protocol. Teams produce a Copilot framework, or a Claude Enterprise framework, or a Salesforce Einstein framework, and rewrite the whole thing when the next AI capability arrives.

The framework should be technology-agnostic at the policy level and specific only at the configuration level. The infrastructure layer describes what observability you require, not which SIEM you use. The operational layer describes what skills governance looks like, not whether skills are implemented through Claude Skills, OpenAI custom GPTs, or a vendor-specific agent platform. The framework should be expressible in standards vocabulary, such that someone fluent in NIST RMF can read your governance documentation and recognize where you are on each of the four functions.
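The split between policy and configuration can be sketched as two separate data structures: requirements that name no vendor, and a binding table that maps each requirement to whatever currently implements it. This is an assumption-laden illustration, not a prescribed schema; the requirement IDs, statements, and the `example-siem` binding are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    req_id: str
    rmf_function: str  # one of GOVERN, MAP, MEASURE, MANAGE
    statement: str     # written in vendor-neutral language


# Policy level: what is required, with no product names.
POLICY = [
    Requirement(
        "OBS-1",
        "MEASURE",
        "Every agent tool call is logged with user, agent, tool, and decision.",
    ),
    Requirement(
        "SKL-1",
        "GOVERN",
        "Every skill has a named owner and a scheduled review date.",
    ),
]

# Configuration level: which concrete system satisfies each requirement today.
# Swapping vendors changes this table only, never POLICY.
CONFIG = {
    "OBS-1": "example-siem: agent tool-call index",  # hypothetical binding
}


def unbound_requirements(policy: list[Requirement], config: dict[str, str]) -> list[str]:
    """Return IDs of requirements that have no configuration binding yet."""
    return [r.req_id for r in policy if r.req_id not in config]


unbound = unbound_requirements(POLICY, CONFIG)
```

Tagging each requirement with its RMF function is what lets a reader fluent in the standard locate it, and the unbound list shows where policy exists but nothing yet enforces it.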

In particular, the framework should be MCP-agnostic. Today the conversation is Figma MCP, Sitecore MCP, and the handful of production MCPs early adopters are deploying. Tomorrow it is Slack MCP, GitHub MCP, internal API MCPs, third-party SaaS MCPs. If your framework only addresses today's MCPs, you will be repeating this exercise twelve months from now.

What the framework is silent on

A working framework is silent on three things on purpose.

AI ethics in the philosophical sense. There are legitimate conversations about appropriate use of AI in society, but the operational governance framework is not where those conversations live. The framework is about controls in place, not about whether a particular use case is the right one to pursue.

Model selection. Whether you standardize on Claude, GPT, Gemini, or multi-model is an architectural decision. The governance framework should hold any choice accountable to the same standards.

Use case approval at the application level. Whether marketing uses AI for campaign drafting or engineering uses AI for code review is a business decision the framework should make easier to evaluate but should not pre-decide. The framework provides the rails. Teams driving on those rails make the use case decisions within the constraints the framework establishes.

The combination of taking the standards seriously, extending them for agentic and MCP specifics, separating infrastructure from operations, and keeping the framework vendor-agnostic and model-agnostic is what produces a governance posture that holds up. The next post covers threat modeling, which is the analytic foundation everything else relies on.

Sources & References

Core standards

NIST AI Risk Management Framework: https://www.nist.gov/itl/ai-risk-management-framework

NIST AI RMF 1.0 publication (Tabassi, 2023): https://www.nist.gov/publications/artificial-intelligence-risk-management-framework-ai-rmf-10

ISO/IEC 42001:2023 AI Management System: https://www.iso.org/standard/42001

ISO official explainer on 42001: https://www.iso.org/home/insights-news/resources/iso-42001-explained-what-it-is.html

Agentic and MCP extensions

Cloud Security Alliance Agentic Profile for NIST AI RMF: https://labs.cloudsecurityalliance.org/agentic/agentic-nist-ai-rmf-profile-v1/

OWASP Top 10 for Agentic Applications announcement (December 2025): https://genai.owasp.org/2025/12/09/owasp-top-10-for-agentic-applications-the-benchmark-for-agentic-security-in-the-age-of-autonomous-ai/

OWASP Top 10 for Agentic Applications resource page: https://genai.owasp.org/resource/owasp-top-10-for-agentic-applications-for-2026/

OWASP Agentic Skills Top 10 (AST10): https://owasp.org/www-project-agentic-skills-top-10/

Errico et al., "Securing the Model Context Protocol: Risks, Controls, and Governance" (arXiv, November 2025): https://arxiv.org/abs/2511.20920

Additional reading

Deloitte on ISO 42001 and AI governance: https://www.deloitte.com/us/en/services/consulting/articles/iso-42001-standard-ai-governance-risk-management.html

Modulos complete guide to NIST AI RMF 1.0: https://docs.modulos.ai/frameworks/nist-ai-rmf/index

Jehad Alkhateeb

AI & Digital Experience Architect with 11+ years of experience building intelligent systems and leading engineering teams. Based in Toronto, Canada.
